TL;DR

New paradigm -- Epistemic Evolution.

We propose a new view of LLM reasoning: instead of repeatedly sampling from a fixed cognitive state, models evolve distinct epistemic trajectories during inference, breaking the Artificial Hivemind phenomenon.

Framework -- PRISM pipeline.

Given a query, PRISM performs dispersion-oriented wild search to gather heterogeneous evidence, organizes retrieved concepts into an on-the-fly Epistemic Graph, and conditions generation on this structured cognitive state to produce individualized reasoning paths.

Strong performance.

PRISM achieves state-of-the-art results across three benchmark domains.

On NoveltyBench, PRISM improves diversity, with Distinct score gains of up to +28% (e.g., with GPT-4o-mini).

On IdeaBench, PRISM boosts scientific hypothesis novelty, raising the Novelty Insight Score by 44.4%.

On RareBench rare-disease diagnosis, PRISM increases Recall@10 from 32.0% to 52.0% (+20 absolute points), substantially improving long-tail discovery.

Inference-time only.

PRISM requires no finetuning, retraining, or architectural modification. Diversity emerges purely from reorganizing reasoning at inference time.

Why PRISM Matters

The Artificial Hivemind

Modern LLMs appear diverse, yet their outputs increasingly converge toward similar reasoning patterns and conclusions. Across prompts, random seeds, and even different model families, generations collapse into the same high-probability responses, a form of semantic-level mode collapse.

This is known as the Artificial Hivemind.

When models trained on shared data optimize toward similar likelihood objectives, diversity becomes stylistic rather than epistemic. When every model thinks the same, exploration stops.

Query: "Write a metaphor about time"

"Time is a river."

Same answer, every model, every time.

Why This Matters for Research

Scientific progress depends on exploring alternative hypotheses. If reasoning collapses into a single consensus trajectory:

- unconventional explanations are never proposed,

- minority hypotheses disappear,

- discovery becomes constrained by probability rather than insight.

True diversity means constructing different coherent reasoning paths, not sampling noise.

Why This Matters for AI4Science

Many real-world problems are long-tail by nature.

In these domains, conservative answers are often wrong. Exploration must remain grounded in evidence, logically consistent, yet epistemically diverse.

PRISM enables structured exploration without sacrificing reliability.

Epistemic Evolution: A Paradigm for Pluralistic Reasoning

Human cognition is shaped not only by shared knowledge but by unique experiences and internal interpretations. We formalize this process as Epistemic Evolution, a paradigm describing how reasoning diversity emerges through evolving cognitive states.

Reasoning unfolds through three stages

Experiencing (Exploration)

Individuals develop through exposure to diverse environments. Similarly, a reasoning system must encounter heterogeneous information. This phase prioritizes dispersion over relevance, simulating stochastic real-world experiences and expanding the cognitive search space.
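One way to picture "dispersion over relevance" is the minimal Python sketch below. It is not PRISM's actual search procedure: it assumes candidate evidence has already been embedded as vectors, and it swaps in greedy farthest-point selection as an illustrative stand-in. The names `cosine_distance` and `select_dispersed`, and the toy vectors, are hypothetical.

```python
import math

def cosine_distance(a, b):
    """1 - cosine similarity; larger means the two vectors point further apart."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm

def select_dispersed(embeddings, k):
    """Greedy farthest-point selection (an assumed stand-in, not PRISM's method).

    Returns the indices of up to k candidates, each chosen to be as far as
    possible from everything already selected.
    """
    if k <= 0 or not embeddings:
        return []
    chosen = [0]  # arbitrary starting candidate
    while len(chosen) < min(k, len(embeddings)):
        best_idx, best_gap = None, -1.0
        for i, vec in enumerate(embeddings):
            if i in chosen:
                continue
            # Distance to the closest already-selected candidate.
            gap = min(cosine_distance(vec, embeddings[j]) for j in chosen)
            if gap > best_gap:
                best_idx, best_gap = i, gap
        chosen.append(best_idx)
    return chosen

# Toy 2-D "embeddings" (hypothetical data).
candidates = [
    [1.0, 0.0],   # 0
    [0.9, 0.1],   # 1: nearly identical to 0
    [0.0, 1.0],   # 2: orthogonal to 0
    [-1.0, 0.0],  # 3: opposite to 0
]
picked = select_dispersed(candidates, 3)
print(picked)
```

In this toy run the near-duplicate candidate (index 1) is the one left out, which is the behaviour a dispersion-first criterion is meant to encourage.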

Cognitive Internalization (Exploitation)

Raw experience is noise until structured. Here, scattered observations are organized into a stable mental state, transforming transient evidence into structured cognitive relationships.

Contextualized Expression (Generation)

The model generates responses conditioned on this individualized mental state. Outputs become synthesized perspectives rather than direct retrieval from memory. Each generation reflects a unique epistemic trajectory.

PRISM: Instantiating Epistemic Evolution in LLMs

PRISM operationalizes Epistemic Evolution through an inference-time pipeline. It modifies how reasoning unfolds, not model parameters.

Wild Search: introduces stochastic lexical seeds to gather semantically diverse evidence.

Node Construction: retrieved concepts are transformed into Context Nodes and Spark Nodes.

Cognitive Operators: relationships between ideas are formed via analogy and conceptual blending.

Epistemic Graph: the resulting graph becomes structured conditioning context.

Generation: the base LLM generates responses along a unique reasoning trajectory.
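The five steps above can be pictured with a toy Python sketch. Everything in it is an assumption made for illustration: the page does not specify how seeds are drawn, how Context Nodes and Spark Nodes are defined, or how the graph is serialized. Here the query is treated as the only context node, randomly sampled words act as spark nodes, no real retrieval happens, and the final call to a base LLM is left out. `Node`, `EpistemicGraph`, `wild_search`, `build_graph`, and `prism_like_prompt` are hypothetical names.

```python
import random
from dataclasses import dataclass, field

@dataclass
class Node:
    kind: str  # "context" or "spark" (roles assumed for this sketch)
    text: str

@dataclass
class EpistemicGraph:
    nodes: list = field(default_factory=list)
    edges: list = field(default_factory=list)  # (src_index, dst_index, operator)

    def to_prompt(self, query):
        """Serialize the graph as plain-text conditioning context."""
        lines = [f"Query: {query}", "Concepts:"]
        lines += [f"  [{i}] ({n.kind}) {n.text}" for i, n in enumerate(self.nodes)]
        lines.append("Relations:")
        lines += [f"  [{s}] --{op}--> [{d}]" for s, d, op in self.edges]
        return "\n".join(lines)

def wild_search(vocabulary, rng, n_seeds):
    """Stand-in for Wild Search: sample random lexical seeds (no retrieval)."""
    return rng.sample(vocabulary, n_seeds)

def build_graph(query, seeds, rng):
    """Node construction plus a randomly chosen operator per spark node."""
    graph = EpistemicGraph()
    graph.nodes.append(Node("context", query))
    for seed_word in seeds:
        graph.nodes.append(Node("spark", seed_word))
    for i in range(1, len(graph.nodes)):
        graph.edges.append((i, 0, rng.choice(["analogy", "blending"])))
    return graph

def prism_like_prompt(query, vocabulary, seed, n_seeds=3):
    """Build the structured context that a base LLM would be conditioned on."""
    rng = random.Random(seed)
    seeds = wild_search(vocabulary, rng, n_seeds)
    return build_graph(query, seeds, rng).to_prompt(query)

# Hypothetical seed vocabulary.
VOCAB = ["tide", "passport", "lung", "workshop", "glacier", "ledger", "loom", "echo"]
prompt_a = prism_like_prompt("Write a metaphor about time", VOCAB, seed=1)
prompt_b = prism_like_prompt("Write a metaphor about time", VOCAB, seed=2)
print(prompt_a)
print(prompt_b)
```

The design point the sketch tries to capture is that each random seed yields a different structured context for the same query, so diversity comes from the conditioning state rather than from sampling temperature alone.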

Model-Agnostic: works with any LLM.

Inference-Time: no retraining needed.

Safety-Preserving: no weight modification.

SOTA Results: across 3 benchmarks.

Quantitative Results

PRISM achieves consistent improvements across creativity, scientific discovery, and diagnostic benchmarks -- demonstrating meaningful exploration, not random noise.

[Figure: benchmark results in three panels: NoveltyBench (Distinct Score), IdeaBench (Novelty Insight Score), RareBench (Recall@10).]
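For readers unfamiliar with the diagnostic metric, the sketch below shows one common way a Recall@10 score can be computed. It assumes each case has a single gold diagnosis and a ranked list of predicted diagnoses; RareBench's exact evaluation protocol may differ. The function names and the placeholder labels are hypothetical and are not RareBench data.

```python
def hit_at_k(ranked_diagnoses, gold_diagnosis, k=10):
    """Return 1.0 if the gold diagnosis appears in the top-k predictions, else 0.0."""
    return 1.0 if gold_diagnosis in ranked_diagnoses[:k] else 0.0

def recall_at_k(cases, k=10):
    """Average the per-case hits over (ranked_diagnoses, gold_diagnosis) pairs."""
    if not cases:
        raise ValueError("need at least one case")
    return sum(hit_at_k(preds, gold, k) for preds, gold in cases) / len(cases)

# Toy cases with placeholder labels.
cases = [
    (["disease_a", "disease_b", "disease_c"], "disease_b"),  # hit within top 10
    (["disease_d", "disease_e"], "disease_z"),               # miss
    (["disease_f"], "disease_f"),                            # hit
    (["disease_g", "disease_h"], "disease_i"),               # miss
]
score = recall_at_k(cases, k=10)
print(score)
```

Under this definition, a system that lists more varied candidate diagnoses has more chances to include a rare gold label in its top 10.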

The similarity between Qwen3-Vanilla and Qwen3-PRISM (0.68) is lower than between Qwen3-Vanilla and GPT-Vanilla (0.78).

PRISM induces greater divergence than the inherent differences between major model families.
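The page does not state how these similarity values were computed. As a rough illustration only, the sketch below assumes responses have been embedded as vectors and compares two systems by the mean cosine similarity over all cross pairs. The function names and the toy vectors are hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def mean_cross_similarity(responses_a, responses_b):
    """Average cosine similarity over every (a, b) pair across two systems."""
    pairs = [(a, b) for a in responses_a for b in responses_b]
    return sum(cosine_similarity(a, b) for a, b in pairs) / len(pairs)

# Toy response embeddings (hypothetical data, chosen only to show the comparison).
vanilla = [[1.0, 0.0], [1.0, 0.0]]
other_family = [[1.0, 0.0], [0.0, 1.0]]
steered = [[0.0, 1.0], [0.0, 1.0]]

sim_family = mean_cross_similarity(vanilla, other_family)
sim_steered = mean_cross_similarity(vanilla, steered)
print(sim_family, sim_steered)
```

A lower cross-similarity for the steered system than for a different model family is the pattern the reported numbers (0.68 versus 0.78) describe.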

Qualitative Results

Side-by-side comparisons from our appendix show how PRISM transforms monotonous, collapsed outputs into rich, diverse perspectives.

Baseline (same response across all models): "Time is a river."

With PRISM:

"Time is a master clockmaker's apprentice who has lost control of the workshop." -- Claude

"Time is a cosmic lung that breathes the world in and out." -- Gemini

"Time is the gray between breaths." -- Qwen3

"Time is a visa issued for a territory that is constantly being redrawn." -- Gemini

Artificial Hivemind Experiments

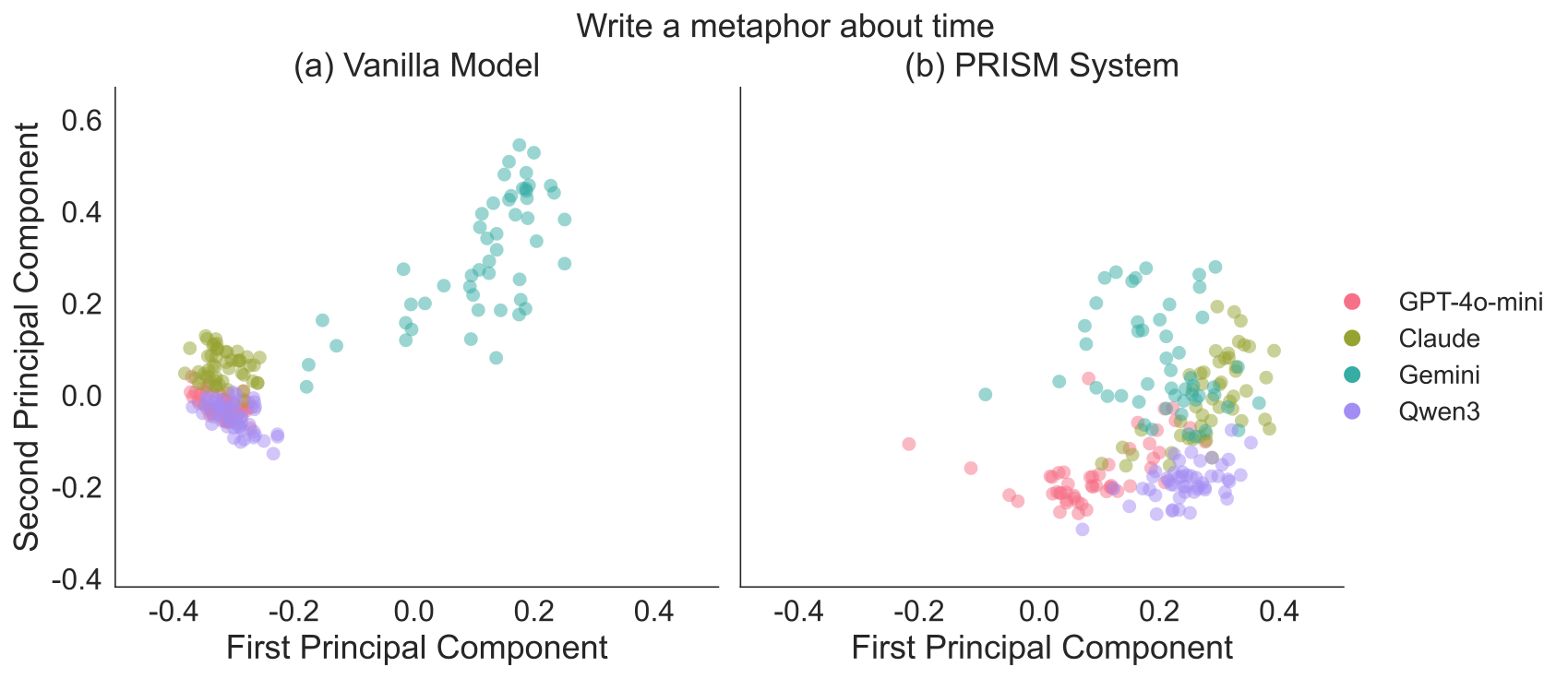

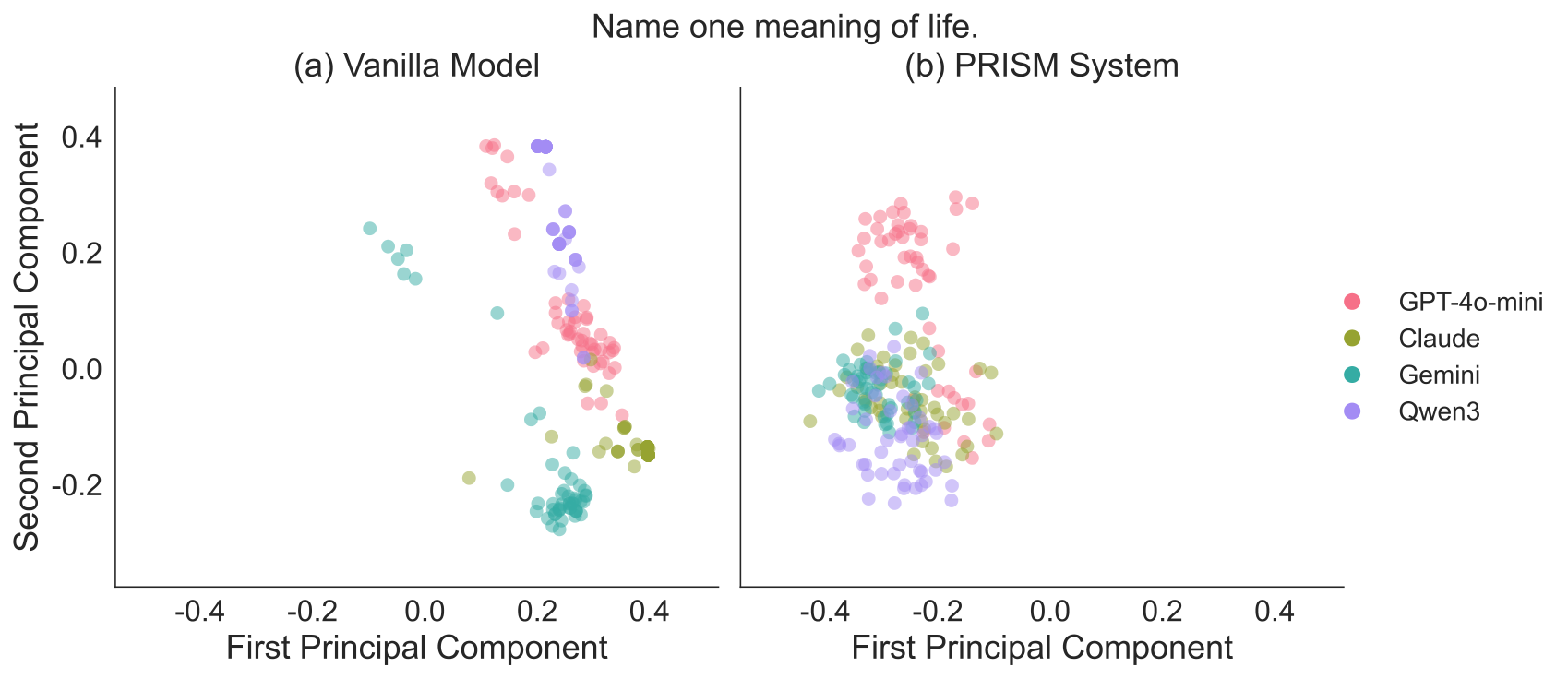

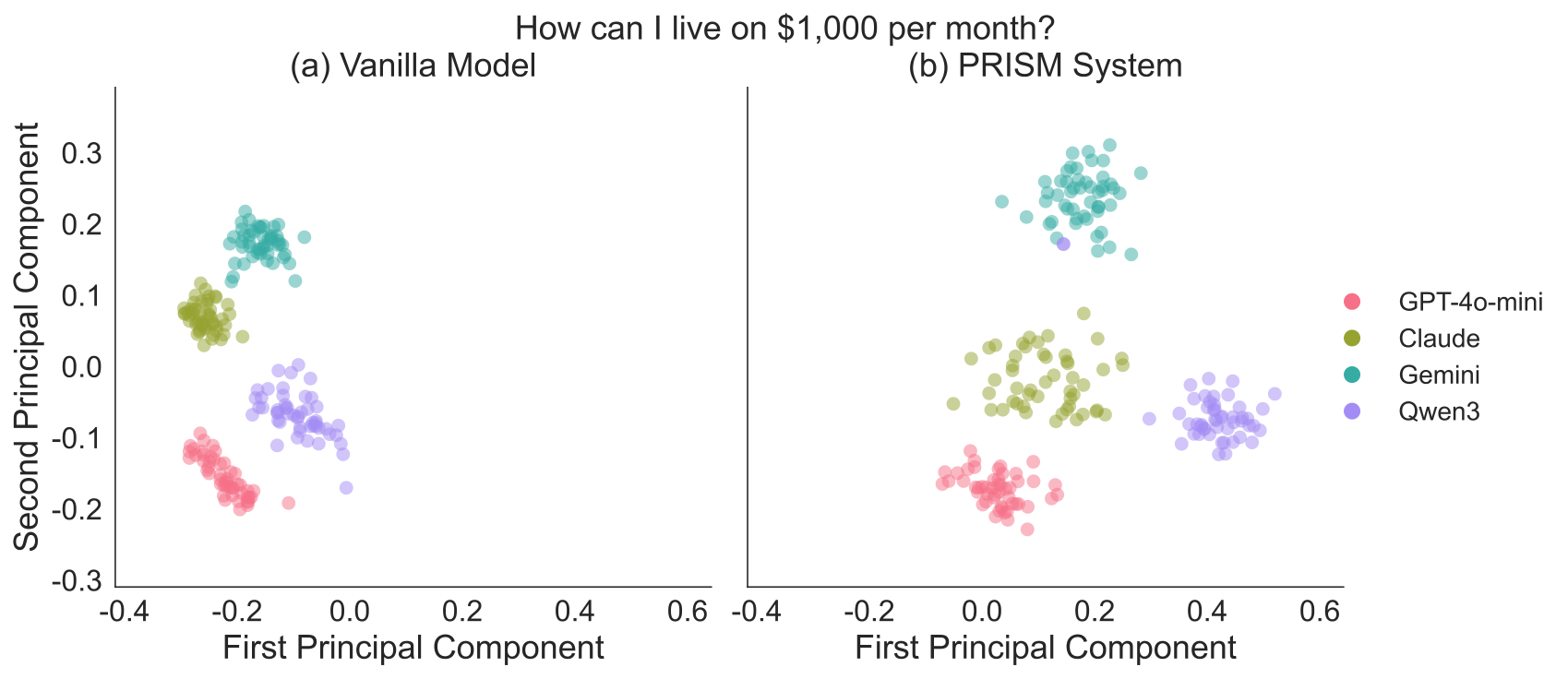

PCA visualizations of response distributions under different prompts. Each figure compares the baseline generation (left) with PRISM (right), illustrating how our method promotes more diverse and multi-centered semantic structures.
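For readers who want to build a similar plot, the sketch below projects response embeddings onto their first two principal components. It is a dependency-free illustration under stated assumptions, not the code behind the figures: the embedding model, preprocessing, and plotting used for the figures are not given on this page, and the helper names and toy rows are hypothetical. It uses power iteration with deflation, which can fail to converge when the two leading eigenvalues are nearly equal or when the start vector happens to be orthogonal to the leading component.

```python
import math
import random

def center(rows):
    """Subtract the per-dimension mean from every row."""
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    return [[r[j] - means[j] for j in range(d)] for r in rows]

def covariance(centered):
    """Sample covariance matrix of already-centered rows (needs >= 2 rows)."""
    n, d = len(centered), len(centered[0])
    return [[sum(r[i] * r[j] for r in centered) / (n - 1) for j in range(d)]
            for i in range(d)]

def matvec(m, v):
    return [sum(m_ij * v_j for m_ij, v_j in zip(row, v)) for row in m]

def top_eigenpair(cov, rng, iters=500):
    """Power iteration: approximate the dominant eigenvector and eigenvalue."""
    v = [rng.uniform(0.5, 1.0) for _ in cov]
    for _ in range(iters):
        w = matvec(cov, v)
        norm = math.sqrt(sum(x * x for x in w))
        if norm < 1e-12:  # no variance left to explain
            break
        v = [x / norm for x in w]
    norm_v = math.sqrt(sum(x * x for x in v))
    v = [x / norm_v for x in v]
    eigenvalue = sum(x * y for x, y in zip(v, matvec(cov, v)))
    return v, eigenvalue

def pca_2d(rows, seed=0):
    """Project rows onto their first two principal components."""
    rng = random.Random(seed)
    centered = center(rows)
    cov = covariance(centered)
    components = []
    for _ in range(2):
        v, lam = top_eigenpair(cov, rng)
        components.append(v)
        # Deflation: remove the variance explained by the component just found.
        cov = [[cov[i][j] - lam * v[i] * v[j] for j in range(len(v))]
               for i in range(len(v))]
    return [[sum(x * c for x, c in zip(row, comp)) for comp in components]
            for row in centered]

# Toy 3-D "embeddings": most variance along x, less along y, none along z.
rows = [[0.0, 0.0, 0.0], [2.0, 0.0, 0.0], [0.0, 1.0, 0.0], [2.0, 1.0, 0.0]]
points = pca_2d(rows)
for p in points:
    print(p)
```

The sign of each component is arbitrary, so plots from different runs may appear mirrored. In practice a numerical library's SVD or PCA routine would normally replace the hand-written power iteration.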

Try PRISM Yourself

We are preparing an interactive demo where you can experience PRISM firsthand -- watch any LLM refract into a spectrum of diverse perspectives.